
January 31, 2007

the hookup culture

Those who are responsible for adolescents and college students are worried about the "hookup culture." Quite a few young people have sex frequently with different partners within the same large social network. Their choice not to repeat sex with the same partner seems deliberate, probably a way to avoid commitment.

I think the hookup culture is new and troubling. It's an example of treating other people merely as means, not as ends in themselves. Participants must constantly estimate their own desirability as sexual partners based on their success in the hookup market. That seems stressful and likely to cause pathologies, such as eating disorders. Because we have a natural proclivity to become emotionally connected to our sexual partners, people who "hook up" are likely to use drugs or alcohol to suppress those feelings. Finally, these young people are missing an opportunity to practice intimacy. I worry that when they actually try to settle down with a partner and start a household or a family, they won't be good at it.

No doubt, this phenomenon has several causes. I suspect that one of them is economic. Young people are coming of age at a time of high risk and high opportunity, when they feel that they need lots of "human capital" to compete in the job market. They want as many experiences and awards as they can obtain to list on their resumes. Human entanglements don’t help, and an emotional relationship might hold someone back.

The deepest problem may be that successful students now do everything (studying, volunteering, exercise, and sex) for instrumental reasons: to prepare themselves for the moment when they graduate from their final level of college. I worry that they are going to find young adulthood very disappointing, and they will regret missing the chance to do things for their own sake.

I would guess that quite a few participants in the hookup culture (especially young women) are actually rather discontented with it. But it would take collective action and reflection to change that culture. Adults are poorly placed to take this on, because our questions are likely to be seen as censorious. (Or we may seem to be challenging freedom and sexual equality.) Although we should stand ready to help, the Millennials themselves will have to deal with this problem, much as previous generations changed gender roles and sexual mores.

[PS See the Washington Post's excerpt from Laura Sessions Stepp's new book Unhooked, which includes this quote from a young woman who is afraid to fall in love:

Her number-one goal, for as long as she could remember, was to excel in school so that she might someday land a great job that would make her financially independent. In high school, she maintained an A average, played volleyball and rowed crew, edited the digital yearbook and played on a church basketball team that won the state championship. Her pace in college was similarly brisk, and she didn't see how, even in her senior year, she could afford to invest time, energy and emotion in a loving relationship. At her 21st birthday party she talked about this with a girlfriend who understood. As the friend said, over the recorded sounds of rapper Jay-Z, "I don't have time or energy to worry about a 'we.'"

This concern for financial independence (not affluence) seems symptomatic of a high-risk/high-opportunity economy that provides weak social supports.]

Posted by peterlevine at 4:40 PM | Comments (2) | TrackBack

January 30, 2007

benefits of service-learning and student government

(Durham, NC): My colleagues and I argue that civic experiences in adolescence make young people into active, effective, and responsible citizens--participants in politics and civil society. However, most students, parents, teachers, administrators, and policymakers are less concerned about civic education than about getting kids successfully through high school and college. Their priorities are understandable. One third of adolescents do not graduate from high school, and those who drop out face very bad prospects. Thus I'm delighted to announce new research showing that two civic experiences--service-learning and student government--substantially increase the odds that students will complete high school and college on time. Presumably, we can enhance adolescents' motivations and sense of connection to school by giving them opportunities to serve and lead. (These are results from two new CIRCLE working papers by Alberto Dávila and Marie T. Mora.)

Posted by peterlevine at 7:41 AM | Comments (0) | TrackBack

January 29, 2007

lordy, lordy

(en route to Durham, NC). Yesterday, I turned forty. Since I happen to have a sick parent right now, "intimations of mortality" would be an understatement. Last time I noticed, my contemporaries were deciding what advanced degrees to pursue, looking for spouses and partners, and wondering whether our elders were paying any attention to us. Now we worry about our own kids, our parents, and our savings; and we are the elders in some of our institutions. All my Gen-X friends seem to live in grown-ups' houses and understand grown-ups' issues like 401(k)s, renovations, and dinner parties. We used to play at adult roles; now we inhabit them. Each day, the world seems exactly like yesterday, but a "heap" of days passes and life is completely different.

Posted by peterlevine at 7:35 AM | Comments (0) | TrackBack

January 26, 2007

efficiency versus "receptivity" in politics

I'm just back from a meeting on how to mobilize young people to vote. Techniques for that purpose are becoming increasingly sophisticated. In the 1990s, you might just mail people flyers reminding them to register. Then organizations began to test various messages with focus groups before they printed their flyers. Now they do true experiments, randomly selecting some addresses to receive one flyer instead of another and keeping track of the response rates. (The most effective messages often perform worst in focus groups.) This is just an example of growing efficiency in public-interest or nonpartisan politics. The end--maximizing the number of voters between the ages of 18 and 25--is generally taken for granted.

I should emphasize that my organization, CIRCLE, has funded and organized such experiments and collected the results in a user-friendly document (pdf).
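For readers who want to see the logic of such an experiment, here is a minimal sketch in Python. It is an illustration, not a description of any particular study: the function names `assign_flyers` and `response_rates`, the flyer labels "A" and "B", and the addresses are all hypothetical. The only idea it captures is the one described above: assign addresses to flyers at random, then compare how often each group responds.

```python
import random


def assign_flyers(addresses, seed=0):
    """Randomly split addresses into two groups, one per flyer version.

    Shuffling before splitting means that nothing about an address
    (neighborhood, age of resident, etc.) determines which flyer it gets.
    """
    rng = random.Random(seed)
    shuffled = list(addresses)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    assignment = {address: "A" for address in shuffled[:half]}
    assignment.update({address: "B" for address in shuffled[half:]})
    return assignment


def response_rates(assignment, responders):
    """Return the share of addresses in each flyer group that responded.

    `responders` is the set of addresses where someone took the desired
    action (for example, registered to vote).
    """
    sent = {}
    responded = {}
    for address, flyer in assignment.items():
        sent[flyer] = sent.get(flyer, 0) + 1
        if address in responders:
            responded[flyer] = responded.get(flyer, 0) + 1
    return {flyer: responded.get(flyer, 0) / count for flyer, count in sent.items()}


if __name__ == "__main__":
    # Hypothetical mailing list and hypothetical responses, for illustration only.
    mailing_list = [f"address-{i}" for i in range(1000)]
    groups = assign_flyers(mailing_list, seed=42)
    who_responded = set(mailing_list[::7])
    print(response_rates(groups, who_responded))
```

Because assignment is random, a persistent difference between the two response rates can be attributed to the flyers rather than to the people who happened to receive them. A real study would also need a sample large enough to rule out chance.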

Meanwhile, back in my hotel room, I was reading Romand Coles' article entitled "Moving Democracy: Industrial Areas Foundation Social Movements and the Political Arts of Listening, Traveling, and Tabling" (Political Theory, 2004). Coles argues that democracy means more than participating and expressing opinions (e.g., by voting). He emphasizes listening or "receptivity." Listening is a source of power--you can strengthen your networks by being attentive to others. But it is also an ethical stance; it opens the possibility that you might need to change your ends, your interests, and even your identity. Coles goes on to argue that literally listening is not enough. You have to move your body to other people's environments. "It is very easy, when the other [person] is speaking from a place--or places--you have never inhabited nor experienced them inhabiting, to shed inadvertently all too many of their words, expressions, and gestures, or to fail to absorb their depth, register their weight, and taste them, or to dismiss them altogether." By "tabling," Coles refers to the practice of literally moving the discussion table around from venue to venue within a community.

Sitting at the table at Wingspread, looking at data on dollars spent per vote cast, I was constantly struck by the contrast.

Posted by peterlevine at 8:59 AM | Comments (0) | TrackBack

January 25, 2007

a growing class gap and the transition to adulthood

(Milwaukee) Life has changed substantially since 1975 for middle-class young people. Life has changed much less--and in less favorable ways--for working-class kids. The result is a growing class gap that influences the way that people become citizens.

For children of the middle class, the first two and a half decades of life are now devoted to "concerted cultivation" (Annette Lareau's phrase). People are spending more years in college and graduate school. Their non-school hours are heavily programmed, from nursery school on, with soccer camps, music classes, internships, and travel. The age of marriage and first pregnancy is much delayed, and many 20-somethings are still living at home. Rates of drug and alcohol abuse have fallen. Parents and other institutions are making intensive investments in these young people's human capital--their capacity to compete in a global marketplace. It makes sense to me that activities such as voting and joining community organizations are simply being delayed, along with home-ownership, fertility, and graduation from the final stage of education.

On several occasions, I have heard marketing experts describe all of today's young people in these terms. The Millennials are said to be sophisticated, savvy, but also verging on spoiled--unsatisfied with courses and jobs that aren't highly stimulating and educational. The Millennials are also depicted as tolerant and comfortable with diversity.

There is a large element of class bias in these descriptions. One third of American adolescents do not complete high school, let alone graduate school. Public schools are somewhat more racially segregated than they were in the 1970s, so plenty of working-class rural and urban youth have little direct experience with racial diversity. There are precious few after-school opportunities in poor neighborhoods or chances for poor adolescents to interact constructively with adults. The age of first pregnancy has not been delayed, but rates of incarceration are sharply up.

Given this widening gap in formative experiences, it is not surprising that there is a growing disparity in civic engagement by class. In its 2006 report entitled Broken Engagement, the National Conference on Citizenship found (using data analyzed by CIRCLE) that there had been a steep decline in the proportion of Americans who said that they had “worked on a community project within the last year.” But the bulk of the decline had occurred among people without college degrees. “College graduates dominate everyday American community life; high school dropouts are almost completely missing. Half of the Americans who attend club meetings—and half of those who say they work on community projects—are college graduates today. Only 3 percent of these active citizens are high-school dropouts. Thirty years ago, the situation was very different. In 1975, only about one in five active participants was a college graduate, while more than one in ten was a high school dropout.”

Posted by peterlevine at 12:28 PM | Comments (0) | TrackBack

January 23, 2007

volunteering down in 2006

(from Wingspread, near Racine, WI) According to the Bureau of Labor Statistics, the volunteering rate for the United States as a whole slipped by 2.1 percentage points in 2006, having been stable for the previous three years. The Bureau adds, "The largest decline was among teenagers." This trend matches our surveys from 2002 and 2006, which showed a decline in youth volunteering after a long and substantial increase during the 1990s. The BLS and other federal agencies are not making much of the 2006 results, which appear on an obscure web page. That makes an interesting contrast with 2005, when the Corporation for National and Community Service announced: "Volunteering Hits a 30-Year High, New Federal Report Finds." As a matter of fact, the rate had not increased in 2005 compared to the plateau of the previous two years.

And now we see a decrease. For my part, I'm not convinced that the rate of volunteering is an important indicator. It tells us nothing about the seriousness of the work being done; and it arbitrarily excludes paid work of public value. I much prefer such questions as: "Have you worked with others to address a community problem?" But if we are going to draw a lot of attention to an increase in the volunteering rate (David Eisner called the level in 2005 "a once-in-a-generation opportunity to get more Americans engaged in making their communities stronger"), then we ought to pay equal attention to a decline.

Posted by peterlevine at 5:34 PM | Comments (0) | TrackBack

privilege, giving way slowly

Joseph Palmi: The Irish have their homeland. Us Italians have our families and our church; the Jews their traditions--hell even the [anti-Black slur] have their music; so what do you people have?

Edward Wilson: We have the United States of America. The rest of you are just visiting.

That's an exchange from The Good Shepherd, Robert De Niro's movie about the origins of the CIA. Edward Wilson is a spy whose career begins in Yale's most famous secret society, Skull & Bones. Joseph Palmi is a Miami gangster whom Wilson will ask to murder Fidel Castro. If we take for granted the premise of the story--that a WASP elite once ran Skull & Bones, Yale, and the CIA--it's interesting to ask why things have changed. Yale is a private institution with a self-perpetuating board. Outsiders cannot vote unless they are invited in. The alumni have contributed Yale's $15 billion endowment and want their own kids to attend. Nevertheless, the place is no longer dominated by rich WASP families, lineal descendants of Edward Wilson's classmates. To be sure, the faculty remains about 90 percent white, and just one in four students is a minority. But the current president is named Levin, and his predecessor who presided when I arrived 21 years ago was one Angelo ("Bart") Giamatti. Why did families like Edward Wilson's cede any ground at all?

To some extent, Yale always had idealistic purposes: "light and truth," in the words of the motto. You can't pursue truth while restricting your faculty and student body to white Anglo-Saxon Protestants. But let's assume that Yale existed in part to help a WASP elite run America. Even if they had some idealistic intentions, running the country was an exercise in power. They succeeded to a great degree, as symbolized by the three generations of the Bush family. Yet clans like the Bushes had to negotiate and share their power. Why?

It seems to me that they always needed Yale to confer status on their children. Any elite needs competent leaders, but the children of aristocrats will vary in skill and discipline. In a democratic society (no matter how imperfect) the elite's leaders need not only talent but also overt evidence of merit so that they can attract votes and legitimacy. A Yale degree signified that its holder had been selected from a large pool on the basis of personal excellence. Yale conferred no benefit unless people believed that it identified the best. But after a while, the children of aristocratic families would have no plausible claim to excellence if they competed only among themselves. A young man would gain little status by entering Yale if the smartest people were going to City College of New York. So the trick was to let in enough Jews and Catholics--and then women, Blacks, Hispanics, and Asians--to make the place genuinely competitive while retaining as many spots as possible for old money. That was a hard process to control, because pretty soon there were Jews and Catholics, and then women, Blacks, Hispanics, and Asians, on the faculty, the Alumni Association, the Yale Corporation, and the president's office. The result was the balance we see today.

Posted by peterlevine at 12:01 AM | Comments (0) | TrackBack

January 22, 2007

problems with "stakeholders"

Today, my colleagues and I discussed a paper on assisted human reproduction in Canada. In passing, we learned that the Royal Commission on New Reproductive Technologies "took advice from about 40,000 individuals and organizations with interest [sic] in the matter"--collectively known as the "stakeholders." Consulting stakeholders is a popular way to enhance the quality and legitimacy of state decisions. It is most often used in the writing of regulations. Laws are written by legislatures, which claim legitimacy on the ground that their members have been elected. But laws always require detailed regulations; and regulators are not elected. Rulemakers and administrators appear more democratic if they consult "the stakeholders" before they make decisions.

Another phrase for "stakeholder" is "interest group." Whereas consulting people who have "stakes" in a given matter sounds wise, giving access to interest groups sounds problematic. Indeed, consulting organized interests raises several concerns:

1. A set of interest-group representatives (no matter how numerous) will not represent the whole population. To form an organization takes resources. Thus people with more money will have more interest groups per capita. Also, people whose interests are more clearly defined and pressing will be more likely to organize themselves. For example, there may be lobbies for various types of medical specialists, but only a weak lobby for patients. Diffuse and subtle interests may be completely lost. For instance, as consumers of food, we might have interests when the government is considering health regulations. (Maybe more state funding for in-vitro fertilization means less funding for agricultural research.) But it is unlikely that a lobby would form to represent the health-policy interests of eaters.

2. Because of the "Iron Law of Oligarchy," the representatives of interest groups may not reflect the opinions of their own members. For example, someone who claims to represent thousands of nurses may not share the views of average actual nurses.

3. Most "stakeholders" arrive with instructions from their organizations. Sometimes those instructions are rather narrow. For instance, a stakeholder may work for a firm with a fiduciary obligation to maximize returns for its shareholders. When people hold rigid but conflicting instructions, they have trouble deliberating as a group. They may negotiate to get the best possible deal, but they cannot learn or change their aims in response to principled arguments. Faced with conflicting demands, public officials may well try to "split the difference." The result is policymaking as bargaining.

4. Impressing policymakers takes skill. The relevant skills are for sale. Groups with more money will have better PowerPoint presentations, more timely polling data, a better grasp of the regulatory timetable and process, more contacts with other groups, and so on. Thus they will tend to prevail.

There are two alternatives to stakeholder consultations--neither of them foolproof. One is to force legislatures to make all the really serious and controversial choices. The other option is to delegate decisions to regulatory agencies but require them to consult representative samples of the public.

[Two classic treatments of this problem are Theodore Lowi's The End of Liberalism (1969) and Robert Reich's "Policy Making in a Democracy," a chapter in his 1990 edited volume The Power of Public Ideas.]

Posted by peterlevine at 1:30 PM | Comments (0) | TrackBack

January 19, 2007

on state apologies and the taking of offense

Around here, the facts of the following case have already been widely reported. But the short version goes like this ...

Virginia Delegate Frank D. Hargrove Sr., age 79, when asked about a proposed resolution to apologize for slavery, expressed his opposition and added, "black citizens should get over it." He then asked, "Are we going to force the Jews to apologize for killing Christ?" Several fellow members of the legislature remarked that his words were personally painful or harmful to them. Del. Donald McEachin "said that when he looks into the eyes of his 102-year-old grandmother, whose parents were slaves, 'quite frankly, it's hard to get over it.'" And Del. David Englin showed a picture of his 7-year-old son, saying that the boy is now "much more likely to be verbally attacked or physically attacked" because he is Jewish.

Hargrove replied that Englin's skin was "a little too thin." Speaker William J. Howell defended Hargrove and accused the press of having "blown [the story] out of proportion" in the hopes of sinking the Republicans with another "macaca" story. But Del. Brian Moran said, "It's not up to Bill Howell to determine whether it's been blown out of proportion. It's about the hurt that's been inflicted on others."

Hurt has been inflicted on others, but that cannot be the end of the story. It is theoretically possible for people to have excessively thin skin, or to take offense at valid statements. Or people may fail to be offended by remarks that are unjust. Furthermore, the issues that Hargrove raised cannot be assessed only by considering their emotional impact on people who have enslaved ancestors or Jewish children. I happen to have a 7-year-old Jewish child myself, but that doesn't give me special standing to complain--more than a WASP neighbor has. As a matter of fact, I find Hargrove's statements laughable and pathetic and likely to redound to the benefit of my favored political party. Thus I have not been hurt by them. But that doesn't make them acceptable.

We must decide whether his position on slavery is morally correct or just. (I concentrate on slavery, although he also seems to suggest views about Judaism.) In my view, an apology is appropriate and indeed obligatory. The apology would not imply that current members of the Virginia General Assembly own slaves or support the practice. Some members happen to be descendants of slaves. But the State of Virginia is a corporate entity that has been continuously operating since 1619. Each successive batch of leaders has taken over the assets and debts of the previous ones. For the first 246 of those years, the Assembly allowed slavery to exist in its jurisdiction. More than that, it enacted laws against fugitive slaves and abolitionists. It also directly profited by using slaves to build its own buildings and other possessions. An apology would come from that corporate entity and would go, not to people who happen to be descended from Virginian slaves, but to the deceased slaves and to the world. It would do nothing to right the original wrong. But if any situation requires an apology, this would seem to be it. And a deliberate refusal to apologize seems unjust--quite apart from whether that refusal happens to hurt anyone's feelings.

Posted by peterlevine at 11:02 AM | Comments (0) | TrackBack

January 18, 2007

a lesson on civil society and civic engagement

I was a guest lecturer this morning at the Washington Semester, an undergraduate program run by American University. (The other guest was Judy Woodruff, who showed some of her footage from the Generation Next television series. She has conducted great interviews with hundreds of young Americans across the country.)

I presented the 40 indicators of civic engagement that we combined to form America's Civic Health Index for the National Conference on Citizenship. They are mostly survey questions that have been asked consistently for the last 30 years, such as "Have you worked on a community project?" "Did you vote in last month's election?" "Does your family usually eat dinner together?" and "Do you believe that most people are honest?"

When you put these indicators together into an index, you see a pretty steep decline. Of course, that is an artefact of which variables you include and how you weight them. I simply went through the 40 indicators, one by one, and asked the class such questions as: "Do you engage this way?" "Do you think it's important for people to do this?" "Is it part of 'civic engagement?'" "Why do you think it has declined since 1975?" "Is the problem with motivations? Or opportunities?" "What should we do about the decline?"

Overall, I thought it made for a lively discussion that brought out many of the empirical and theoretical issues in the field.

Posted by peterlevine at 2:17 PM | Comments (0) | TrackBack

January 17, 2007

Judge Posner v. David Cole

The New York Review carries a dramatic exchange between Richard Posner (the amazingly prolific, polymathic federal judge) and Georgetown law professor David Cole, who had reviewed the judge's latest book.

Posner begins with a sentence that should shame him when he rereads it in a calmer mood: "Professor David Cole, who doubles as the legal affairs correspondent of The Nation and has received awards from the National Lawyers Guild and the American Muslim Council (founded by Abdul Rahman al-Amoudi, a supporter of Hamas and Hezbollah who in 2004 was sentenced to twenty-three years in prison for illegal dealings with Libya), is far to the left on matters of civil liberties and national security."

This is a lob over the net that Cole smashes back in Posner's face. "It is regrettable," Cole writes, "that a federal judge feels the need to engage in ad hominem accusations of guilt by association rather than simply responding on the merits to a critical review of his book." Cole (whom I happen to know and like) has received awards from conservative and non-ideological groups. Abdul Rahman al-Amoudi did not found the American Muslim Council (AMC), which in fact fired him. And "FBI Director Robert Mueller gave the keynote address at the AMC's annual convention in 2002, and defended his decision through a spokesperson by describing the group as the 'most mainstream Muslim group' in the United States. Smearing Muslim groups has become an obsession for some on the right, but I expect more from Judge Posner."

Advantage Professor Cole. But not game, set, and match. Posner's letter--if not his book, which I haven't read--presents an argument that Cole doesn't fully address. Posner's ad hominem, while shameful, doesn't invalidate his argument. I think it goes like this:

1. The merits of a decision always depend on the consequences, measured in terms of aggregate welfare. ("Consequentialism.")

2. The judiciary has the role of enforcing certain abstract and universal principles that are constraints on the other branches of government. The justification for this role is consequentialist. Our overall system works better when certain rules are consistently enforced.

3. However, applying a rule against warrantless electronic surveillance would have consequences that are difficult to predict. Although the consequences might be positive, they might also be negative. Judges lack the expertise to make reliable predictions about such matters. Therefore, decisions should be left to the elected branches. Likewise, Posner says that he is personally opposed to banning "Islamic rhetoric," but his reasons are consequentialist, and he would yield to better informed officials in the elected branches.

4. If judges do decide to impose general rules or principles in these areas, they impose their arbitrary wills or make implicit cost-benefit calculations for which they are unqualified. There are no right answers to controversial and contested issues involving the U.S. Constitution, because "the text is very old and to a degree obsolete, tradition is a mixed bag (the Alien and Sedition Acts and Lincoln's suspension of habeas corpus in the Civil War are part of the tradition), the precedents are mixed as well and many Cole rejects, and 'reason' as lawyers use the term is in the eye of the beholder."

David Cole provides some sharp specific answers to points in Posner's letter, but I think he misreads the judge in part. For example, Cole writes, "As for ethnic profiling, far from criticizing it, Judge Posner's book concludes that it is perfectly constitutional." Right--I think Posner would say that he doesn't favor ethnic profiling yet he believes the decision should be made by Congress and the president. In other words, it should be constitutional. Maybe that's wrong, but it's not illogical.

There are several options for responding directly to Posner:

1. Constitutional reasoning is not arbitrary. Yes, there are disagreements in the present, and the record of past interpretations is mixed. But the same could be said of science, yet most of us don't conclude that scientists make merely arbitrary judgments. The exercise of legal reasoning (which is informed by, but not identical to, moral reasoning) can yield correct or incorrect results. The correct result in a case of warrantless electronic surveillance is that it is unconstitutional. (Reasons must then be given to show that this result is correct.)

or

2. The moral worth of a policy or decision is not measured by its consequences. What is right is the application of valid moral rules or principles. Therefore, it's beside the point to say that free speech by Islamic radicals may undermine security (even if that were true). Security is not the point; free speech is. To make that argument plausible, one must ground freedom of speech in something deeper, such as human autonomy and dignity.

or

3. Perhaps there is an element of arbitrariness in judicial decisions. And perhaps the right question is whether the judiciary enhances aggregate welfare. Nevertheless, our overall system works well because of checks and balances. Both elected branches of government are prone to majority tyranny. They can undermine aggregate welfare by discounting the rights of minorities. For example, if the revolting stories of torture described by Raymond Bonner in the same issue of TNYRB are true, then clearly the CIA did much more harm than good in those cases. It is the special role of the judiciary to look out for minorities whose interests might be trampled. The judicial method is to apply abstract principles that limit government. The net results will be positive.

Posted by peterlevine at 10:16 AM | Comments (3) | TrackBack

January 16, 2007

noise pollution

I'm at the gate at Atlanta Airport, trying to read, write, and (possibly) think. CNN is blasting in the background and competing with Norah Jones, whose voice emerges from the p.a. system right behind me. Should I wish to follow the CNN anchorperson's train of thought--such as it is--I would have a hard time. Every few seconds a special security announcement interrupts the TV to remind us that the current threat level is orange. Beeping trucks pass by, travelers are called urgently to board, and people shout into their cell phones. Not a single person in this crowded lounge is actually watching the TV, but some have books open on their laps. Silence would be delicious.

Posted by peterlevine at 6:32 PM | Comments (0) | TrackBack

January 15, 2007

why colleges should embrace a civic mission

I'm traveling today to Oglethorpe University in Martin Luther King's city of Atlanta. Oglethorpe has begun a major initiative to incorporate service and civic engagement into the whole experience of its students. I'm going to moderate a day of discussion for the faculty. I won't talk much: I want to listen and help the professors to develop their own ideas. However, I have promised a brief opening presentation about why colleges and universities should embrace their civic missions. My outline follows:

In the 19th century, citizenship and higher education went together. The good citizen, like the good college graduate, knew his or her duty and did it.

In politics, for example, voting was a public act. You voted for your party's candidate, and your neighbors watched you do it. Most people were brought up within a party and were expected to stay loyal to it. The parties were defined by ascribed identities that were difficult for individuals to escape: class, race, religion, and region.

Newspapers routinely mixed editorializing with factual reporting, and each one aimed at a specific identity group. They advocated fiercely.

In colleges and universities, there was little choice among courses. Most college presidents were clergymen; teaching involved lots of moral exhortation. Moral development was a central function of the institution. Student bodies were homogeneous--often from the same denomination, race, and community. Oglethorpe University had a rather typical founding purpose: to train young men of Georgia to be ministers for the Presbyterian Church.

A new model of citizenship arose in the 20th century, and a new form of college and university developed to embody it. Now the good citizen was an independent, informed maker of free choices.

In politics, voting became a private act (thanks to the secret ballot). Because voting for a party is a crude way to choose one's political preferences, there were efforts to disaggregate the choice. Party-line voting was discouraged; citizens were supposed to choose individual candidates. The referendum, initiative, and recall were launched.

The best newspapers now aspired to neutrality and separated fact from opinion. Their role was to inform the private reader who would then make choices.

Higher education changed accordingly. Students were given choices among courses and majors. Professors won autonomy and academic freedom. Knowledge and critical thinking became the chief educational goals. Graduates were supposed to choose their beliefs, their political preferences, and their social roles based on information. Indoctrination was seen as a fault, and as a result there was much less moral exhortation.

This model reached its apogee soon after World War II. The Oglethorpe Idea (launched in 1944) was unusual in that it put an emphasis on "citizenship." More typical was a statement by the University of Chicago's president, Robert Hutchins, in 1933: "'education for citizenship' has no place in the university." Hutchins led a modern research university devoted to dispassionate academic study.

This ideal came under attack after the War:

Conservatives noted that the alleged neutrality of the modern university was misleading, because the curriculum and ethos were pervasively secular. (See William Buckley, God and Man at Yale, 1951.)

Liberals and leftists noted that the supposedly independent and neutral university won contracts from the Defense Department and prepared its graduates to run corporate America. It was also the gateway to the middle class, yet its admissions decisions were hardly neutral.

Others (regardless of ideology) argued that a university devoted to choice lacked any central purpose. It had become a hollow shell without a meaningful set of values that could orient young people.

Meanwhile, there were gradual but substantial declines in the actual proportion of Americans who were participating in public life. Presented with a free choice among civic or political groups and causes, many chose not to engage at all. Between 1975 and 2005, the decline was 14% for belonging to at least one group, 31% for being interested in public affairs, 38% for working on community projects, 38% for regularly reading the newspaper, and 44% for attending community meetings.

Clearly, higher education did not deserve all the blame for this disengagement. (Indeed, people without college degrees were the most likely to drop out of public life.) But higher education wasn't doing enough to help--to develop interests, skills, and habits of participation.

Colleges and universities also had another good reason to worry about civic engagement. They were now trying to attract and retain a broader range of students, many of whom were not comfortable or motivated in institutions devoted only to academic knowledge and critical thinking. These students needed to see applications and purposes for what they were learning.

Therefore, a new set of teaching practices has developed that goes beyond both the 19th- and the 20th-century university. These practices include service-learning, community-based research, living/learning communities, and exercises in public deliberation.

At their best, these forms of education avoid indoctrination and mere moral exhortation. They prize and teach critical thinking and independence. Nevertheless, they deliberately develop skills, habits, and values that will connect graduates to public life. In short, they don't tell students what to think about controversial issues, but they do train them to think, to care, and to act on public matters.

Some controversies and challenges to consider:

Can civic education avoid indoctrination? [Stanley Fish says no: "Universities could engage in moral and civic education only by deciding in advance which of the competing views of morality and citizenship is the right one, and then devoting academic resources and energy to the task of realizing it. But that task would deform (by replacing) the true task of academic work: the search for truth and the dissemination of it through teaching."]

Are colleges and universities competent to develop character, especially if such education requires them to work with non-academic institutions and communities?

Is it fair to put a university's resources into service or civic education, when students have paid their tuition and alumni have given money on the understanding that the institution is devoted to academic study?

Posted by peterlevine at 8:41 AM | Comments (0) | TrackBack

January 12, 2007

the president and the Constitution

One letter in today's New York Times says: "More than 20,000 additional troops are being put in harm's way on the say-so of one man. Isn't that more characteristic of a dictatorship than a democracy?" Another writer asks, "Are we totally helpless against this man who seems more like an arrogant, power-hungry dictator than a president?" Meanwhile, over at Balkinization, Prof. Sandy Levinson has been arguing that the Constitution is flawed--for many reasons, but in particular because it provides no means to remove the "catastrophic" President Bush before his term ends.

I'm against the escalation and regard the current war as a fiasco. But I don't think we have a dictator, nor should we rush to amend the Constitution.

We elected the president who launched the Iraq war and the Congress that authorized it. The president was reelected in 2004 because he was an incumbent in the middle of a war and the opposition failed to make a persuasive case for change. Most voters then gradually lost faith in the war and ultimately elected a new Congress that no longer has a pro-war majority. This Congress could block the escalation; it could even end the war. It will certainly exercise more oversight. But at this moment it is divided on whether to end the war quickly, as are the American people.

In short, the president does not control the political machinery. Congress has at least as much clout; it simply isn't sure whether to use it. The American people have the right and capacity to apply pressure through protests and advocacy; most are not doing so. Thus the president is not a "great opposeless will," a dictator. He is still making the short-run decisions because his opponents have calculated that they would rather let him do so than take the heat for blocking him.

Our government is designed to respond to public opinion, but at a deliberate pace. For example, the bicameral legislature and the filibuster rule in the Senate slow the rate of change, and they are making it difficult for the Democrats to pass legislation to block the "surge." Right now, the pace is frustrating. But those safeguards were valuable in the immediate aftermath of 9/11, when I fear that majorities wanted to take even more drastic and aggressive action.

Jack Balkin recommends a new check on the president: a vote of no confidence. George W. Bush would lose such a vote--if not now, then soon. But I suspect that LBJ, Carter, Reagan (after Iran-Contra), and Clinton also would have faced serious efforts to remove them before the ends of their terms. That's five of the last seven presidents--the others being Ford and George H. W. Bush, who were defeated for reelection. I doubt we'd be better off with an executive so severely weakened.

Posted by peterlevine at 2:51 PM | Comments (1) | TrackBack

January 11, 2007

consequences of particularism

I drafted a paper more than a year ago that drew some political implications out of a philosophical doctrine called "moral particularism" (click for pdf). I haven't had a chance to improve and expand that paper for publication. It actually covers a huge amount of ground very thinly (which makes it inappropriate, in its current form, for academic publication). Here are a few key ideas:

Some concepts have the following features:

1) They are morally important. When they show up as features of a situation, they usually ought to influence our moral judgment, albeit in conjunction with other features.

2) These concepts lack consistent moral "valence." Depending on the situation, they can make it worse or better. There are no general rules that reliably tell us what their valence will be in all the instances of a certain description. By way of analogy (which I owe to Simon Blackburn), we can't tell in advance--or by means of a principle or rule--whether a splash of red paint will make a painting better or worse. That is because the proper unit of aesthetic analysis is the whole painting, not an area within it. Nevertheless, a splash of red paint is important to the overall beauty of a painting. It might ruin a Vermeer but save a De Kooning. Likewise, we can't tell whether love makes a situation better or worse; but it usually matters.

3) These concepts are indispensable. We cannot resolve moral questions appropriately by appealing only to concepts that avoid 1) and 2).

I think these three features apply to all of the traditional virtues and vices: courage, pride, partiality, respect, and many more. As an example, consider love. Love is morally significant and can be either good or bad depending on the situation. The question is whether we can use a rule or principle to delimit the good cases of love from the bad. Then we could replace the ambiguous word "love" with two words, one for the good form and the other for the bad. (Or there might turn out to be more than two subsets of love.)

I don't have a proof that such an analysis must fail. But I doubt that it can succeed, because I suspect that we humans happen to have an emotion, love, that can be either good or bad, or can easily change from good to bad (or vice-versa), or can be good and bad at the same time in various complex ways. Even when love is good, it carries some problematic freight because of its potential to be bad. And when love is wrong, it nevertheless has some redeeming qualities because it is akin to love that is good. I think the instinct to divide it into eros and agape or other such subcategories is fundamentally mistaken.

(That does not mean, however, that there is no difference between good and bad love. Moral judgment is necessary, but it has to be about situations, not about concepts in the abstract.)

Numerous implications follow from this doctrine, but the one I want to mention here is political. For a particularist, there can be no technique for resolving moral questions that is analogous to the techniques of economics, engineering, or law. First, there cannot be a computational method (such as the one that utilitarianism promises) because that would presume that one consistent good, such as happiness, is the only concept that counts. The particularist replies that other concepts must also be considered, and they happen to be unpredictable. Second, the particularist doubts that we can develop a set of sharp and valid moral definitions or principles and then apply them to cases. Although some moral concepts may be definable in ways that give them consistent moral valence, others cannot.

Thus there is no expertise or procedure that will yield wise moral judgments. However, particularism is consistent with public deliberation. When people discuss what should be done, they apply rules and principles--sometimes validly and sometimes not. But they also tell stories so as to bring out the salient features of a situation and depict those features in a positive or a negative way. They place particular aspects of the situation in various contexts. And they bring out themes (not rules or principles but repeated motifs of moral significance). In making such arguments, they apply their distinct backgrounds and perspectives. This is the best form of moral reasoning, assuming that particularism is right.

I do not assume that everyone has equally valid and useful points to contribute in deliberation. Yet we should allow everyone to participate because: (a) rules that exclude some and favor others tend to be biased--merely to serve special interests, and (b) many more people have valid moral insights than one might think. Thus particularism does not imply egalitarianism, but it counts in its favor.

Posted by peterlevine at 11:53 AM | Comments (0) | TrackBack

January 9, 2007

legislative strategy and the "surge"

For the good of the country, Congress should probably block any increase in the number of troops sent to Iraq. The most effective way to do that would be to add an amendment or rider to a military appropriations bill, because the president must sign that legislation. From a partisan political standpoint, however, the Democrats are probably better off objecting to the "surge" without actually blocking it with a rider. If they stop the president from fighting the war as he wants, he can blame them for the ultimate debacle in Iraq. If they use the "power of the purse" to stop the surge, their critics can say that they failed to fund our soldiers. On the other hand, if they allow the president to proceed with his surge over their objections, the blame will rest with him.

I'm for principle and national interests rather than partisan advantage and the avoidance of blame. However, I doubt that the Democrats have the votes to pass an anti-surge amendment in both houses of Congress. Therefore, principle will not prevail. Would the following idea work instead? Congress would pass the appropriation that the president requests (to fund our troops fully) and then debate a separate bill to prevent any additional Americans from being sent to Iraq. Of course, the president would veto that bill--if it passed--and would then implement the surge. Yet there would be several advantages to passing separate legislation. It would show that responsibility for the surge rested with the president. Arguably, Congress would discharge its duty by debating and (I hope) voting against troop increases. And Democrats from strongly anti-war districts would have an opportunity to cast a clear vote.

I'm not sure why Senator Kennedy introduced a bill "to prohibit the use of funds for an escalation of United States forces in Iraq above the numbers existing as of January 9, 2007." I would much prefer legislation that avoided any mention of "funds" and simply said, "To prohibit the escalation of United States forces." I suppose Senator Kennedy wants to stay on safer constitutional ground by invoking the congressional power of the purse. He may wish to avoid the argument that the president alone may decide how to conduct a war. But that argument is questionable. In any case, the president will veto Kennedy's bill unless it becomes an amendment to an appropriations bill. It might as well be written so it says what it should: No surge.

Posted by peterlevine at 9:58 PM | Comments (0) | TrackBack

Cole Campbell, 1954-2007

Cole Campbell died suddenly last Friday in a car accident. Cole had been editor of The Virginian-Pilot and The St. Louis Post-Dispatch, where he introduced and developed the concept of "public journalism." Cole and his reporters did not take for granted that there was a "public" (in John Dewey's sense) for their work. In other words, they did not assume that there were people out there who showed interest in public issues, who talked with one another, and who belonged to effective groups. In fact, all such forms of political engagement were in decline--just as newspaper readership was falling. In response, Cole and other practitioners of public journalism created neutral forums for public discussion. They stimulated interest in civic participation by covering civil society (not just campaigns and politicians). They changed daily practices in the newsroom. For example, instead of automatically looking for controversies and problems, they would sometimes celebrate consensus and civic assets. They also found new sources: civic leaders who didn't hold official titles. In short, Cole and his reporters redefined "the news" and redesigned the newspaper to promote civic life.

I knew Cole pretty well for more than eight years. I brought him to Maryland once and attended many meetings and conferences with him. He was a live wire--funny, interesting, provocative, intellectual, a voracious reader, and always very full of life. In his gig as a dean of journalism, he undertook a typically creative experiment. I believe that most or all of his students were working together to build an impressive online resource about the environment of Lake Tahoe. It was, characteristically for Cole, a gift to the public.

Incidentally, I thought the New York Times' obituary, written by David Cay Johnston, was quite good. Johnston quoted several people I would have recommended as experts on Cole's contributions to journalism. This is only surprising because the Times never did any public journalism itself. Apparently, they have in-house knowledge of the movement. (For fine, personal obituaries by people who really knew Cole, see Rich Harwood and Noelle McAfee.)

Posted by peterlevine at 7:28 AM | Comments (0) | TrackBack

January 8, 2007

five things about me

Russell Arben Fox has tagged me in a game that is going around the blogosphere. I'm supposed to write "five things you don't know about me." Here goes:

1. I used to live with Marcel. Marcel was once a beloved baby elephant at the Paris Zoo. During the Prussian siege of 1870-1871, the famished Parisians were forced, much to their sorrow, to eat Marcel. They retained his skin, which was stuffed with a beer barrel and straw. After some years of posthumous service in a Paris bar (beer came out of his trunk), Marcel was moved to London. He belonged to the owners of an apartment near Victoria Station that my family rented in 1979-81.

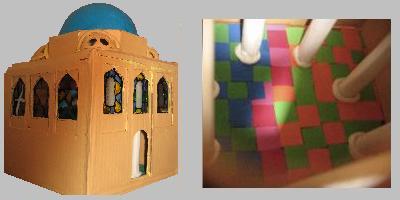

2. My 7-year-old daughter and I have constructed what we call our "mosque." It is about 14 inches high. It isn't really a mosque, because it lacks a mihrab (to orient people for prayer) or a minbar (the Islamic equivalent of a pulpit). That's probably just as well; it might seem disrespectful for two unbelievers to build a mosque for play. Our motives were the opposite of disrespectful. We (or at least I) love Islamic architecture and wanted to figure out how to construct a public building--which could be a bath, a school, or a library--in the 16th-century Ottoman style.

3. In the 1990s, I used to play the clavichord. It is one of the two quietest instruments I know, the other one being the lute. If an air-conditioner is running in the same room with our clavichord, you can't hear a note from more than three feet away. Its low volume was an attraction for me, because we live in a small apartment. So was the fact that J.S. Bach would have used a clavichord in his home. Tuning it, however, is so time-consuming that I have mostly given it up. (I did receive a didgeridoo for Christmas last year, but that's mostly for looking at.)

4. I basically identify as a Jewish American, a grandchild of immigrants. But it turns out that my oldest American ancestor, by way of my mother, was one Isaac Learnard, who died in Chelmsford, Mass. anno domini 1657.

5. I am color-blind and can hardly sing a note. (Or, even worse, I can only sing one note.) Yet I love music and painting. Would I enjoy these arts less if I could actually perceive them?

I tap phronesisaical.

Posted by peterlevine at 7:05 AM | Comments (0) | TrackBack

January 5, 2007

understanding knowledge as a commons

Charlotte Hess and Elinor Ostrom have just published a volume entitled Understanding Knowledge as a Commons: From Theory to Practice. The following is a brief excerpt from my own chapter that refers to several other contributions and introduces the idea of knowledge as a commons:

Just as a village common is composed of shared grass, a knowledge commons is composed of shared knowledge. Hess and Ostrom note that knowledge involves discrete artifacts (such as articles, maps, databases, and web pages), facilities (such as universities, schools, libraries, computers, and laboratories), and ideas (such as the concept of a commons itself). Thomas Jefferson already realized that ideas are pure public goods, for "he who receives an idea from me, receives instruction himself without lessening mine; as he who lites his taper at mine, receives light without darkening me."

Facilities are usually rivalrous, yet they can be run as commons and can house shared artifacts--as Benjamin Franklin demonstrated when he founded the first public lending library. Both the library building and its collections were shared, even though they were scarce and rivalrous.

In the age of networked computers, many artifacts that would have been rivalrous can be digitized, posted online, and thereby turned into public goods. Computer networks can themselves be seen as facilities that overcome some scarcity problems. The number of potential exchanges among people (or machines) that are linked in a network rises geometrically as the network adds members. Therefore, the more users, the better the network serves each user as a tool for communication and research.

I admire commons such as public libraries, community gardens, the Internet, and bodies of scholarly research because they encourage voluntary, diverse, creative activity. However, I have distinguished between a libertarian commons and an associational commons. In a libertarian commons, anyone has a right to use (and sometimes also to contribute to) some public resource. This right is de facto if no one is able to block access to the good or if no one chooses to do so. The right is de jure if it arises from a law or policy that guarantees open access. In contrast, an associational commons exists when some good is controlled by a group. As [James] Boyle notes, "the commons is not the same as the public domain; successful commons are frequently characterised by a variety of restraints--even if these are informal and collective, rather than coming from the regime of private ownership."

There is an important category of commons that are owned by private nonprofit associations. The owner (a formal organization) has the right and power to limit access, but it sees itself as the steward of a public good. As such, it sets policies that are intended to maintain a commons. For example, an association may admit anyone as a member, on the sole condition that he or she protects the common resource in some specified way. (Libraries tend to function like this.) Or a group may only admit those who have special qualifications, but impose obligations on its members in order to enhance the public good. (Scientific and professional associations often use this model.) Religious congregations, universities, scientific organizations, and civic groups differ in their rules and structures, but they often have this function of protecting or enhancing a quasi-public good.

[I then defend the associational commons and argue that to sustain it, we must find ways to include young people in its governance. That has been the agenda of our small local experiment, the Prince George's Information Commons.]

Posted by peterlevine at 1:34 PM | Comments (0) | TrackBack

January 4, 2007

the Maryland Civic Summit

Annapolis, MD: We at CIRCLE helped to plan the State of Maryland's Summit on Civic Literacy, which occurred today. The Summit was funded and charged by an act of the State Assembly. There were representatives present from the State Senate and House, the judiciary (including the Chief Judge), the State Department of Education, and various key nonprofits. We heard a great keynote talk by my University of Maryland colleague James Gimpel, the lead author of one of the best books about how young Americans develop into citizens, Cultivating Democracy: Political Environments & Political Socialization in America (Brookings, 2003). Jim was quite eloquent about the enormous educational disparities between inner-city Baltimore and the suburbs of Washington, DC. See his book for vivid details.

The afternoon's session was devoted to deliberation. We formed policy recommendations for the Assembly to consider. I moderated the discussion. Participants were supposed to vote electronically using touchpads, but the equipment didn't work. No matter; we still deliberated and recorded everyone's preferences. My two favorite ideas (but not the top vote-winners) were:

1) Collect data about the after-school opportunities that are available to our students throughout the state. I suspect that this research would identify big disparities and thus make the case for significant legislation.

2) On a pilot basis, create a few new positions in select schools. These new employees would connect students to external opportunities--field trips, special programs, internships, service projects, etc. (It can be very hard for outside institutions to navigate schools, and vice-versa; but museums, courts, colleges, environmental organizations, churches, and many other groups have educational opportunities to offer.)

Posted by peterlevine at 5:20 PM | Comments (0) | TrackBack

January 3, 2007

what should we say to our soldiers in Iraq?

What should Americans who oppose the current war say to men and women who have served in Iraq, or to their families and close friends? I think the standard response is to sympathize with them, on the ground that our civilian leaders made a colossal mistake by sending them into danger and hardship with a foolish plan and insufficient justification. In a word, our service-people are victims.

That attitude must strike almost all troops--even those who oppose the decision to invade Iraq--as patronizing. If the whole war is nothing but a mistake, and our troops are mere victims, then everything they strive to accomplish from day to day is pointless. It doesn't matter whether they do their job excellently or perform it negligently. If such pity prevails on the left, we may face a long period of division and backlash.

I suggest an alternative view. In my opinion, the war was unjustified and its conduct was atrocious. However, it is crucial that the United States possess a lethal, efficient, professional, volunteer military under civilian control. Sometimes our elected leaders (with perhaps some help from the top brass) will make big mistakes in deciding how to use lethal force. Their mistakes may be strategic or moral; they may be sins of commission (e.g., Iraq) or of omission (e.g., Rwanda). The proper response is always to criticize our leaders and to offer persuasive alternatives in elections--something that the Democrats failed to do in 2004.

Meanwhile, by doing the best possible job under the circumstances, the professional military serves our democracy. Our officers and enlisted people learn; they develop experience. They save one another's lives. Through their daily choices, they can mitigate the harms caused by the elected leaders to whom they must defer.

Isn't there a point at which a person in uniform must nonviolently resist his or her government? Shouldn't an officer's conscience obligate him or her to resign? The answer is yes, but only in extreme circumstances. Hitler's General Staff should have resigned, even if that meant death to them personally. But there is a fundamental, categorical, moral difference between invading Poland and invading Iraq; between Auschwitz and Abu Ghraib. While I oppose the Iraq war--more clearly in hindsight than ex ante--it wasn't an infamous act. It reflected poor judgment, worse execution, and a questionable mix of motivations, but not a giant war crime.

In any case, I would set the bar for civil disobedience rather high for uniformed officers in an all-volunteer military that serves a democracy. Otherwise, every time the civilian leadership makes a moral mistake, the officer corps must all quit and we will have to start over. We need them to develop experience, to look out for their troops, to obey military ethics, and to improve the institution of the military.

In deciding whether to use civil disobedience--for example, whether to resign a commission--one must weigh the consequences, all things considered. It is not clear to me that resignations would shorten this war, especially since the public has already awakened and is demanding peace. (By the way, a military resignation need not be accepted.)

When the United States is judged for its decision to invade Iraq, it will not count in our favor that our soldiers learned from their experience there. We have no right to hone our own institutions at the expense of another people. But the blame must fall on our elected officials, on us for electing and re-electing them, and on the political opposition for its poor leadership. Our soldiers who do the best possible job under the circumstances may take genuine pride in their service; and we owe them a full measure of respect and gratitude.

Posted by peterlevine at 7:31 AM | Comments (1) | TrackBack

January 2, 2007

my 1,000th post

This is post number one thousand, or so my blogging software (MovableType) tells me. Next Monday, January 8, this blog will turn four years old, which makes it--if I may say so myself--a hardy survivor.

I like to say that I have posted every working day since January 8, 2003. I think that claim was accurate until just last week. I returned to work last Wednesday but couldn't write anything here because I was in the middle of upgrading the software to MovableType 3.3 and I hit some snags (due to my own incompetence). It was a frustrating feeling to be able to see the blog online but not to change it in any way. As is so often the case, I upgraded not because I was dissatisfied with the existing software, and not because I wanted to do something new that was impossible with the old version, but only because my site was vulnerable to malicious behavior and I had to install some new security fixtures. I'm all for open networks that allow everyone to innovate; but they do have their drawbacks.

Posted by peterlevine at 12:35 PM | Comments (0) | TrackBack

January 1, 2007

populism

I have collected some of my past posts--as well as an important guest post by Harry Boyte--under the new category of "populism." I've done that partly because Harry has persuaded me that "populism" is a helpful name for some of my core philosophical commitments. Meanwhile, I've come to think that we need to reclaim the full meaning of "populism" at a time when people described as populists are back in the news. I'm thinking of Sherrod Brown, who won the Ohio Senate race by opposing free trade and globalization; John Edwards; and the Venezuelan president Hugo Chavez. In the debate about these men (different as they are) the question is about redistribution: Is it politically smart and morally right to use the power of the state to help working-class people economically, possibly at the expense of the rich? (See Taylor Marsh or the Hope Street Group.)

Actually, I would vote in favor of redistribution, because I think that reasons of prudence and justice favor it. However, I'm not sure that it's a winning political strategy, given the public's understandable distrust for the state. Nor does redistribution exhaust the value of populism and popular sovereignty. There are five other dimensions that are at least as important in populism's heritage and theory:

1) Popular participation in government and civic life. This means not only high voter turnout but also opportunities for constructive engagement at all levels, from school boards to federal agencies. Real "populists" should revive such opportunities, which have shrunk. For example, according to Elinor Ostrom, the percentage of Americans who hold public office has fallen by three-fourths since mid-century, thanks to the consolidation of local governments, the growth of the population, and the replacement of elected or volunteer officers by experts.

2) The capacity to create public goods. The most popular examples today are online: for example, YouTube--whose voluntary users have created and given away $1.65 billion worth of products--and Wikipedia, another voluntary, collective enterprise whose market value is unknown but whose worth is inestimable. Such collective work is an old American tradition, as Tocqueville recognized in the 1830s; and it occurs offline as well as on the Internet. Policies can either frustrate or support such popular creativity; supportive policies are truly "populist," even though they are not redistributive.

3) A quality dimension. True populism doesn't pander to or romanticize the public. It recognizes that the great mass of people have latent or potential capacities for true excellence, but we need appropriate opportunities, incentives, organization, support, and education to realize our civic and political potential. That said, populism also rejects cynical and dismissive views of the American people as we are today (such as this).

4) Respect for diversity. Some populists assume that there is a homogeneous mass of "ordinary" or "real" people, as opposed to special interests, elites, and various other minorities--including immigrants. But there is an equally prevalent and far more attractive tradition of American populism that identifies the people with diversity. This is the populism of the 1890s at its best, of folk music, of the Popular Front, and of the Civil Rights Movement. I am aware that 1890s populism turned exclusive and Soviet Communism influenced the Popular Front; but both movements also had truly pluralist strains.

5) A cultural dimension. Populism is not only about laws and policies but also about a way of representing ourselves. In a populist culture, many people are involved in celebrating, memorializing, and debating their common values and hopes through cultural products such as music, graphic arts, folklore, historical narratives, and videos. The results are diverse but serious; people use the arts to define and address public problems. Today, in my opinion, the biggest obstacle to cultural populism is mass culture (which is popular but not participatory), and the greatest hope lies in collective voluntary work.

Posted by peterlevine at 11:14 AM | Comments (2) | TrackBack